What Is Model Quantization? How Are AI Models Made Smaller?

AI models are powerful, but they are also very heavy (large in size).

The technique used to solve this problem:

Model Quantization

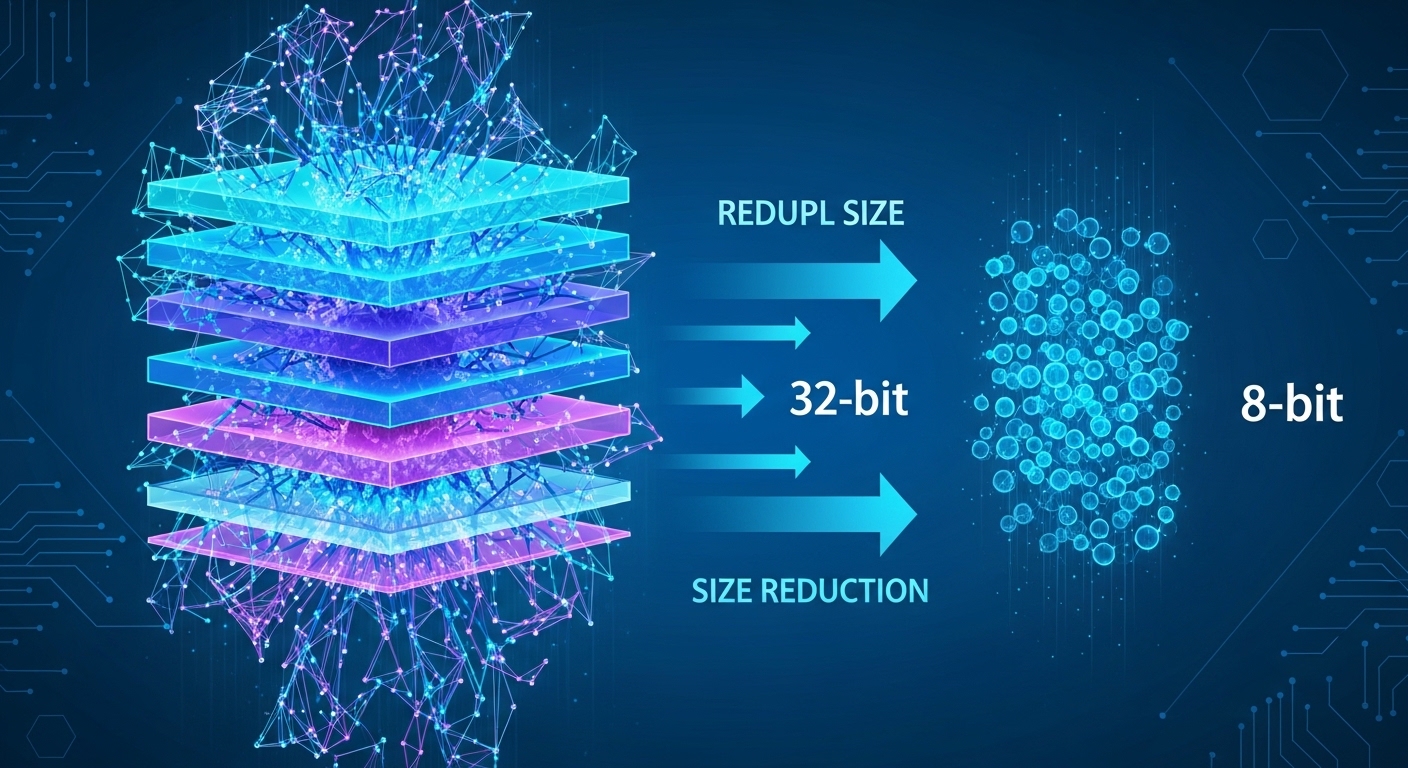

What is model quantization?

Model quantization is the process of converting the numbers (weights) inside an AI model into lower-precision numbers.

Simple definition:

Big model → Smaller model → Same performance (almost)

Why is quantization important?

Without quantization:

The model is large

Memory usage is high

Processing is slow

Quantization helps:

Reduce model size

Increase speed

Save memory

Simple example

Before quantization:

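The model stores each weight as a 32-bit number

After quantization:

The model stores each weight as an 8-bit number

Result:

The weights take about a quarter of the space, and the model usually runs faster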
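The arithmetic behind this example can be sketched in a few lines of Python. The parameter count below is a made-up figure for illustration, and the helper `model_size_mb` is a hypothetical name, not part of any library. It only counts the storage for the weights themselves.

```python
def model_size_mb(num_params: int, bits_per_weight: int) -> float:
    """Approximate storage for the weights alone, in megabytes (10**6 bytes)."""
    return num_params * bits_per_weight / 8 / 1_000_000


# Hypothetical model with 100 million weights (an assumption for illustration).
NUM_PARAMS = 100_000_000

size_32bit = model_size_mb(NUM_PARAMS, 32)
size_8bit = model_size_mb(NUM_PARAMS, 8)

print(f"32-bit weights: {size_32bit:.0f} MB")
print(f"8-bit weights:  {size_8bit:.0f} MB")
print(f"Reduction:      {size_32bit / size_8bit:.0f}x")
```

Going from 32 bits to 8 bits per weight cuts weight storage by a factor of four, regardless of how many weights the model has.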

How does it work?

AI model weights are normally stored as high-precision numbers.

Quantization:

Simplifies the values

Slightly reduces the precision

But performance can remain almost the same.
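To make the idea concrete, here is a minimal sketch of one common scheme, symmetric 8-bit quantization, written in plain Python. The article does not say which scheme it has in mind, so treat this as one possible illustration. The function names `quantize` and `dequantize` and the sample weights are assumptions made up for this example.

```python
def quantize(weights, num_bits=8):
    """Map floats to signed integers using a single shared scale factor."""
    qmax = 2 ** (num_bits - 1) - 1           # 127 for 8 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in q]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.05, 0.4, -0.63]

q, scale = quantize(weights)
restored = dequantize(q, scale)

print("integers:", q)
print("scale:   ", scale)
print("restored:", [round(r, 4) for r in restored])
```

Each weight is stored as a small integer plus one shared `scale`. The restored values differ from the originals by at most half a step (`scale / 2`), which is why the loss in precision is usually small.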

Types of quantization

Common methods:

1. Post-training quantization

Applied after training is complete

2. Quantization-aware training

Applied during training itself
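The difference between the two methods can be sketched with a toy one-weight model in plain Python. This is not how any real framework implements it. The helper names `fake_quant` and `train`, the step size of 0.1, and the target weight of 0.74 are all assumptions chosen for illustration. In the quantization-aware path, the forward pass uses the rounded weight while the update is applied to the full-precision weight, a trick commonly called the straight-through estimator.

```python
def fake_quant(w, scale=0.1, qmax=127):
    """Round w to the nearest multiple of scale (clamped), returned as a float."""
    q = max(-qmax, min(qmax, round(w / scale)))
    return q * scale


def train(xs, ys, quant_aware, steps=200, lr=0.05):
    """Fit y = w * x by gradient descent on squared error."""
    w = 0.0
    for _ in range(steps):
        for x, y in zip(xs, ys):
            # Quantization-aware training sees the rounded weight in the forward pass.
            w_used = fake_quant(w) if quant_aware else w
            err = w_used * x - y
            # Straight-through estimator: rounding is treated as identity
            # in the backward pass, so the full-precision w is updated.
            w -= lr * 2 * err * x
    return w


xs = [1.0, 2.0, 3.0]
ys = [0.74 * x for x in xs]   # hypothetical target weight of 0.74

# Post-training quantization: train in full precision, then round once.
w_ptq = fake_quant(train(xs, ys, quant_aware=False))

# Quantization-aware training: rounding is part of every training step.
w_qat = fake_quant(train(xs, ys, quant_aware=True))

print("post-training quantized weight:", round(w_ptq, 2))
print("quantization-aware weight:     ", round(w_qat, 2))
```

With a single weight both methods land on a nearby grid value, so this toy only shows the mechanics. In larger models, the usual argument for quantization-aware training is that the other weights can adapt to the rounding during training.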

Real world usage

Quantization is used in:

Mobile AI apps

Edge devices

IoT systems

Real-time AI systems

Running AI on small devices

Benefits

Quantization advantages:

Faster inference

Lower memory usage

Lower cost

Better scalability

Trade-off

There is a small limitation:

A slight drop in accuracy

In most cases this is acceptable.
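One rough way to see this trade-off is to measure how far the weights move when they are rounded at different bit widths. The sketch below uses randomly generated weights, not a real model, and the helper name `quantization_error` is hypothetical. It measures error in the weights themselves, which is only a proxy for the accuracy drop on a real task.

```python
import random


def quantization_error(weights, num_bits):
    """Mean absolute difference between weights and their quantized versions."""
    qmax = 2 ** (num_bits - 1) - 1
    scale = max(abs(w) for w in weights) / qmax
    restored = [
        max(-qmax, min(qmax, round(w / scale))) * scale for w in weights
    ]
    return sum(abs(w - r) for w, r in zip(weights, restored)) / len(weights)


# Synthetic weights drawn from a normal distribution (an assumption, not real data).
random.seed(0)
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]

errors = {bits: quantization_error(weights, bits) for bits in (16, 8, 4)}

for bits, err in errors.items():
    print(f"{bits:>2}-bit: mean absolute error = {err:.6f}")
```

Fewer bits mean coarser steps and a larger average error, which is the source of the accuracy drop. Whether that drop is acceptable has to be checked on the actual model and task.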

Why do companies use it?

Companies want:

Fast AI systems

Low cost deployment

Mobile compatibility

Quantization is a key solution

Future of quantization

In the future:

Ultra-efficient AI models

Edge AI growth

Real-time AI everywhere

Quantization will become very important.

Takeaway for Kannada readers

AI models may be powerful, but without optimization they are not practical.

Quantization helps AI go:

From heavy → lightweight → usable